The Real Reliever Problem

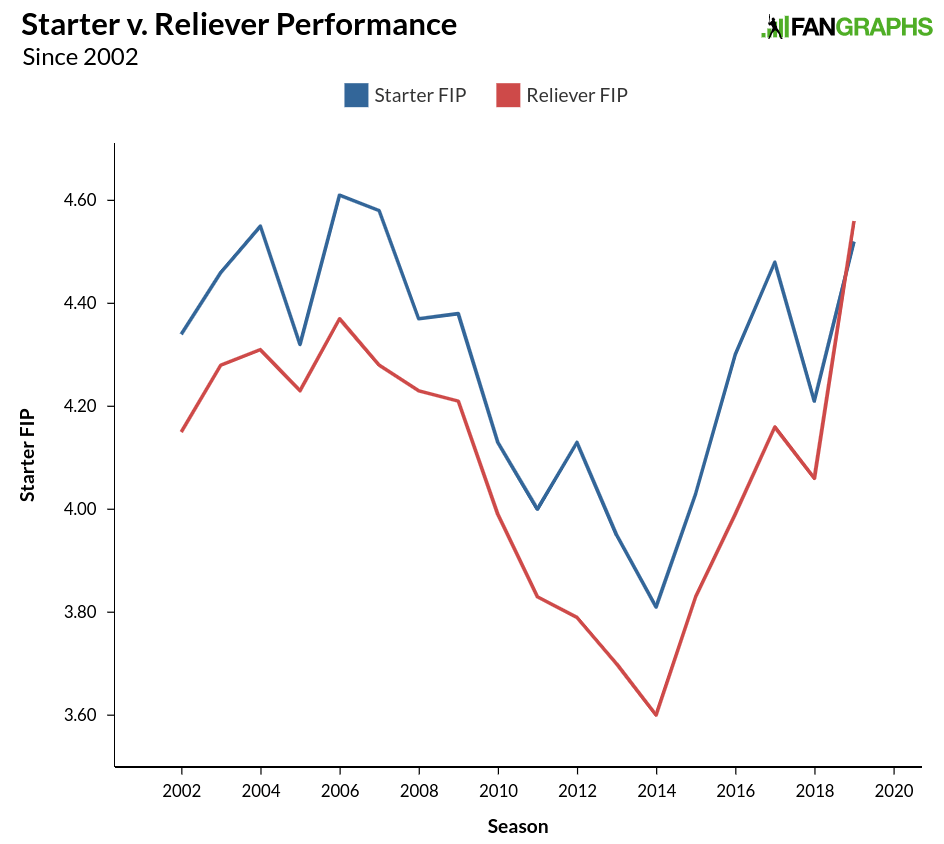

With so many variables, isolating specific trends in baseball can be tricky. Relievers have been pitching more and more innings. Strikeouts keep going up. The ball has been juiced, de-juiced, and re-juiced, making home run totals hard to fathom and difficult to place in context, both for this year and for years past. One noticeable aspect of this season’s play, influenced by some or all of the factors just listed, is that relievers are actually performing worse than starters. Our starter/reliever splits go back to 1974, and that has never happened before. Here is how starters and relievers have performed since 2002:

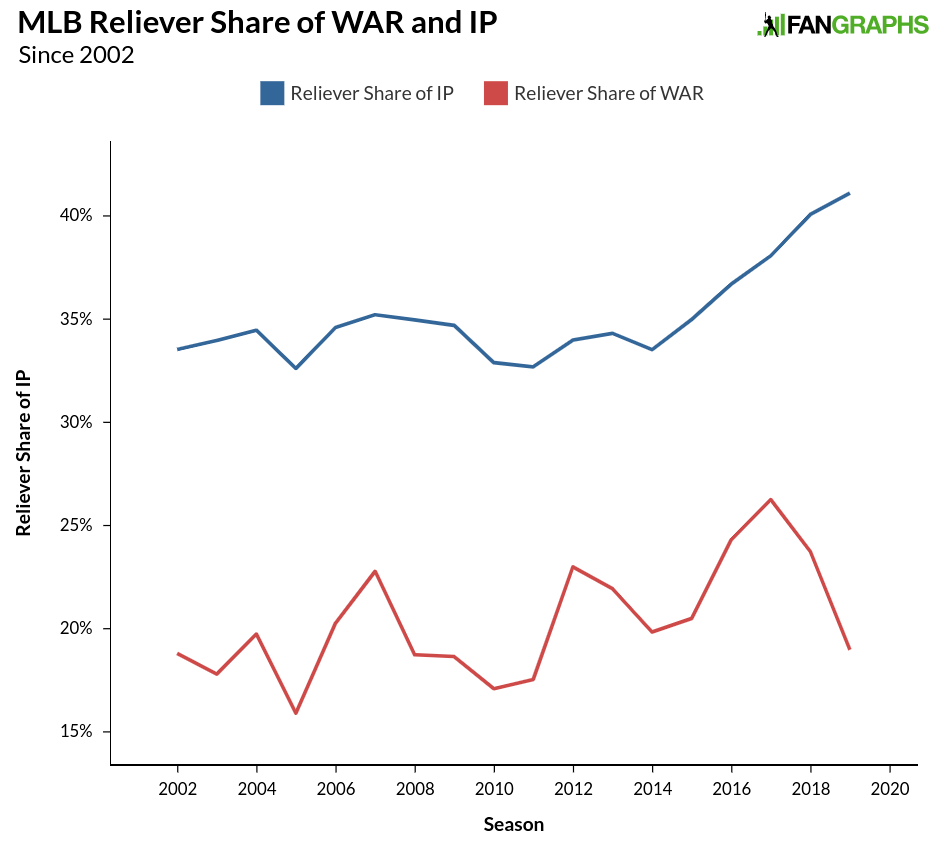

A healthy gap between the two roles has existed for some time, but seems to have taken an abrupt turn this year. Ben Clemens looked at the talent level between starters and relievers earlier this season in a pair of posts that discussed how starters are preparing more like relievers, as well as the potential dilution of talent among relievers. The evidence seemed to point toward the latter theory, though exactly how that dilution has affected performance comes in a rather interesting package. Providing some evidence for the dilution effect is the number of innings handled by relievers in recent seasons. While the idea of starters pitching better than relievers is a new one statistically, the trend of increasing reliever innings likely made this year’s change possible. Below, see the share of reliever innings and reliever WAR since 2002:

The share of innings taken by relievers has gone up rather sharply since 2015, when it was around 35%, to this season’s 41%. While the reliever share of WAR went up in 2016 and 2017, last season saw the share go back to 2016 levels, and this season, reliever WAR is roughly the same as it was from 2002-2015. More innings have not meant greater efficacy; indeed, increased exposure has resulted in a decline in overall quality from relief pitchers.

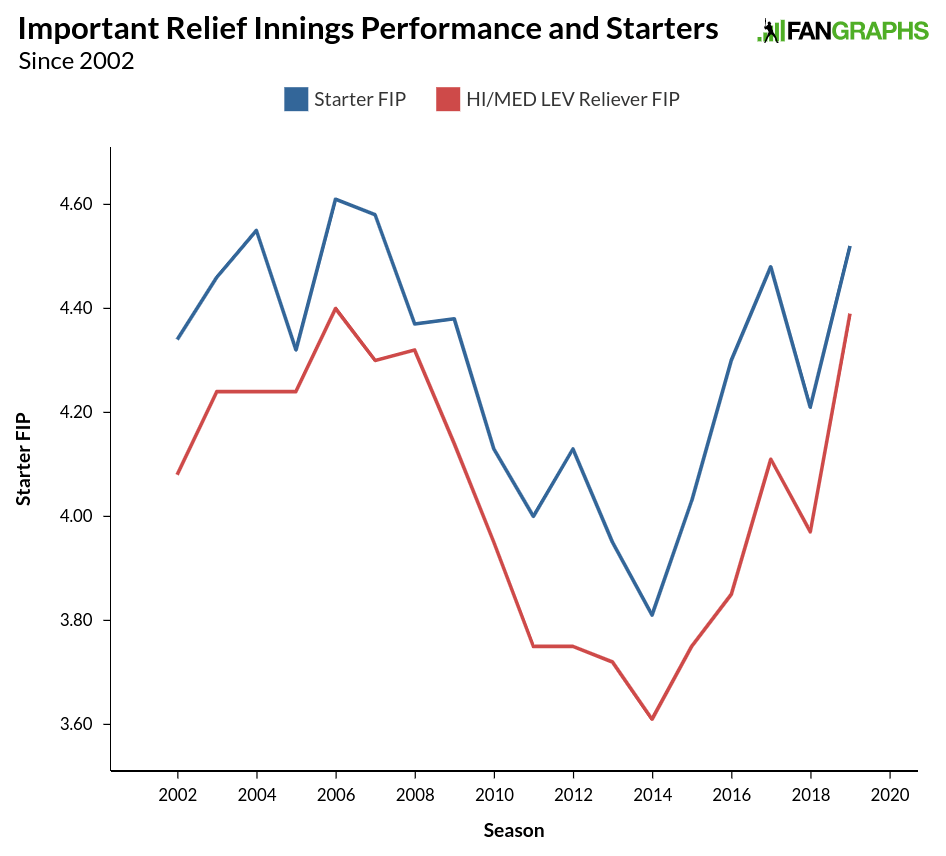

We might assume that the overall quality of relievers has declined from top to bottom, but that hasn’t been the case. If we assume that managers are generally optimizing their bullpens and pitching good relievers in important situations (and bad relievers in unimportant situations), we can look at performance by leverage index to see where the gains and losses in effectiveness have been made. The graph below shows reliever performance in high- and medium-leverage situations compared to overall starter performance:

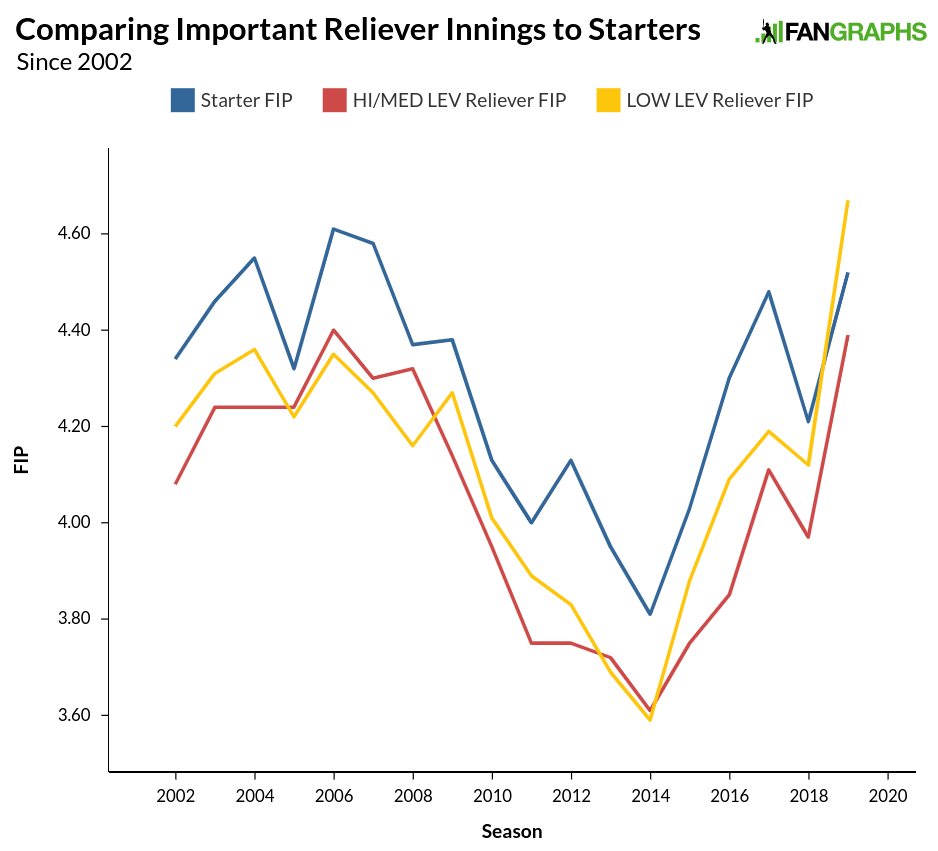

When we compare only the relievers we presume to be the better ones, we see a performance in line with previous seasons. The gap is smaller this season than in years past, but it is still evident. Here’s what happens when we add in low-leverage performances:

Much of what we saw prior to 2015 was simply managers using their bullpens sub-optimally. The pitchers pitching in less important situations were almost exactly as good as the pitchers trying to get outs in moments that mattered. Either all relievers were the same, or good relievers were pitching too much when the game wasn’t on the line, while poor relievers were taking on too many hitters with the outcome of the game yet to be decided. Since 2015, we’ve seen a small gap in performance between the two different situations, with an explosion this season as the 0.28 difference in FIP almost doubles last season’s difference and the average since 2015, both of which were 0.15.
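For readers who want to check the "almost doubles" claim, here is a minimal sketch. It uses the standard public FIP formula and the two gaps quoted above (0.28 and 0.15). The `constant` default is an illustrative placeholder, not the actual league constant for any season, and the function names are made up for this example.

```python
def fip(hr, bb, hbp, k, ip, constant=3.10):
    """Fielding Independent Pitching from raw counting stats.

    `constant` rescales FIP to the league ERA; it varies by season,
    and 3.10 here is only an assumed placeholder.
    """
    return (13 * hr + 3 * (bb + hbp) - 2 * k) / ip + constant


def gap_ratio(current_gap, previous_gap):
    """How many times larger the current FIP gap is than the previous one."""
    return current_gap / previous_gap


# Gaps between low-leverage and medium/high-leverage reliever FIP,
# as quoted in the paragraph above.
this_season_gap = 0.28
prior_gap = 0.15

print(f"Gap ratio: {gap_ratio(this_season_gap, prior_gap):.2f}x")
```

A ratio a little under 1.9 is consistent with describing this season’s gap as almost double the earlier one.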

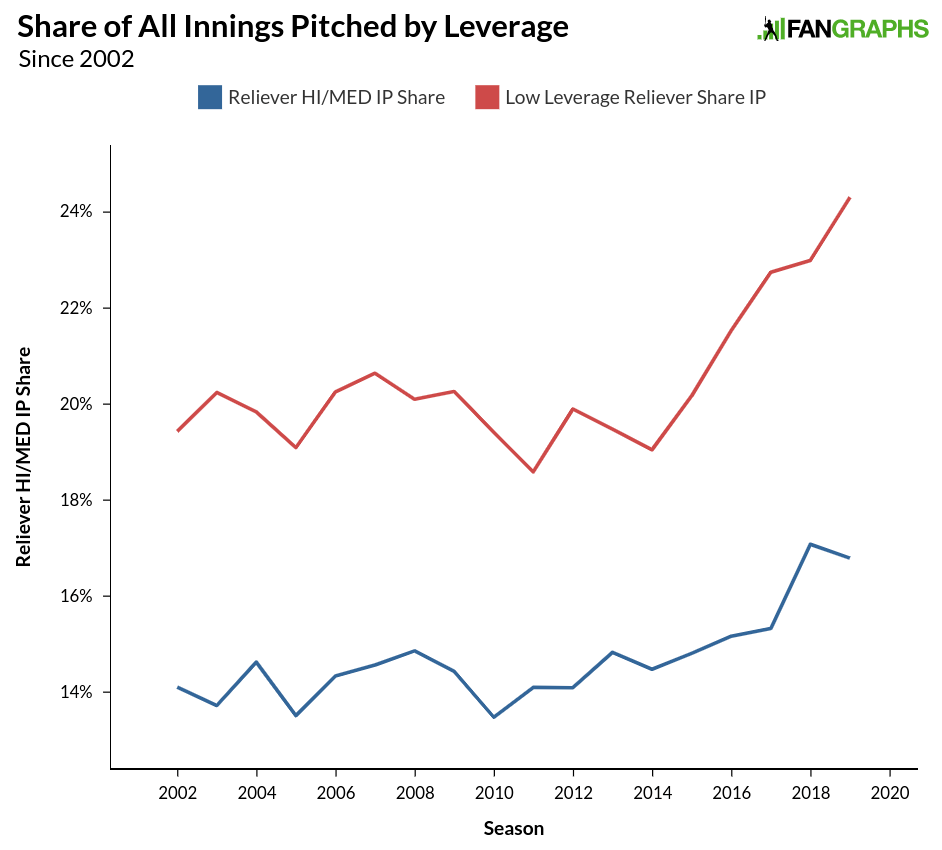

These differences are exacerbated when we consider the number of innings being covered by the groups above. We know that the reliever innings have shot up. Here’s the breakdown between low-leverage situations and medium- and high-leverage situations:

Over the past five seasons, medium- and high-leverage situations have gone up by about two percentage points. Low-leverage situations have shot up at twice that level, increasing by about four percentage points. Not only are the worst relievers performing at a lower level than their peers compared to past seasons, they are also pitching a lot more.

When we consider the issue of reliever usage and performance, we generally aren’t talking about taking out the starting pitcher who used to finish a game, but now only goes seven innings. Those relievers are still getting the job done, at least relative to starter performance. We aren’t even talking about a starter being removed after five innings in a close game and asking the bullpen to get the last 12-15 outs. When those situations occur, relievers are still performing better than starters, just as they have in years past.

Where baseball might have a reliever problem is when the game is 6-1 in the fourth inning. Managers are no longer asking their starters to soak up innings, and they aren’t going to use one of their better relievers when the team doesn’t have a great chance of winning. There are some positive aspects to this strategy, as it is possible teams are doing a better job — or at least trying to do a better job — at preserving the arms of young pitchers, and that goes for close games and blowouts. The incidence of Tommy John surgery is down the last couple of years from where it was five years ago, and it has been down considerably so far this season. That might be a blip, but it could be a sign teams are collectively turning a corner when it comes to pitching injuries.

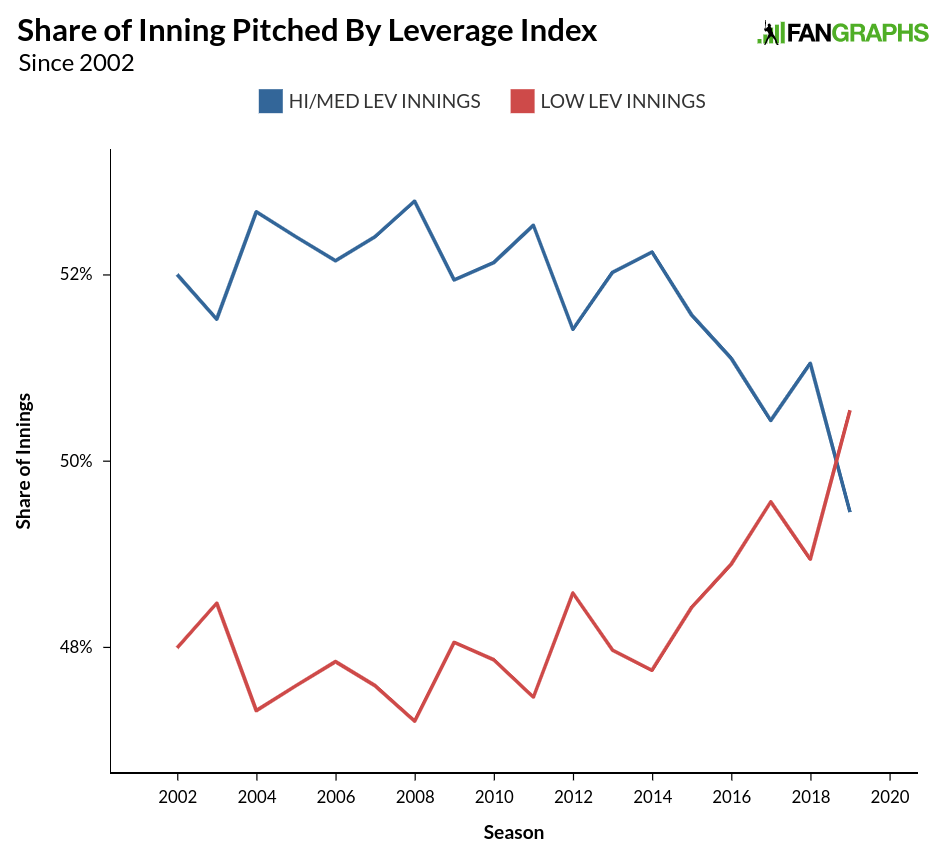

As for the bad, we are seeing a lot more non-competitive innings. The graphs above aren’t too concerning, but this next one should be. Below is the overall percentage of innings pitched with a high- and medium-leverage index compared to a low-leverage index:

For the first time since at least 2002, there have been more low-leverage innings pitched than any other type. The two-percentage-point drop over the last few seasons is the innings equivalent of 50 full games in a season going from interesting to dull. Some of this might come from managers more optimally using their bullpens, thus failing to make a contest of all nine innings so they can preserve their team’s players for when the club has a better chance at winning. Some of it probably has to do with all of those home runs, which result in blowouts and would be happening regardless of bullpen usage. The biggest culprit, though, is likely competition, or lack thereof. The less parity (and higher scoring) there is in the sport, the more non-competitive games result. This season, the top-to-almost-bottom competitive National League still has a majority of innings pitched in medium- and high-leverage situations, but the American League, with less competition and a more top- and bottom-heavy setup, is nearly two percentage points lower in terms of leverage.
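The "50 full games" figure can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below assumes a standard schedule of 30 teams playing 162 games each, and treats a two-point shift in the share of innings as the same share of full games. The function name is hypothetical.

```python
TEAMS = 30
GAMES_PER_TEAM = 162

# Each game involves two teams, so divide by two to count games once.
GAMES_PER_SEASON = TEAMS * GAMES_PER_TEAM // 2  # 2,430


def equivalent_games(share_shift):
    """Convert a shift in the share of innings (e.g. 0.02 for two
    percentage points) into an equivalent number of full games."""
    return share_shift * GAMES_PER_SEASON


print(f"{equivalent_games(0.02):.1f} games")
```

Under those assumptions the shift works out to a bit under 49 games, in line with the rounded figure of 50 above.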

We might be reaching an equilibrium in terms of reliever and starter performances, but in closer games, we still see a normal disparity between starters and relievers even as relievers have taken on more innings. The low-leverage reliever innings are bringing overall reliever performance way down. While a dilution of talent might be somewhat responsible for this change, the real issue is a lack of competition. Competitive teams (or a lower run-scoring environment) lead to competitive games, and putting a better product on the field for all 30 teams would mean more exciting play for everyone.

Craig Edwards can be found on Twitter @craigjedwards.

Really interesting stuff, Craig. Thanks!

Was there any effort to isolate opener/first man from the data?

Great question as you’d think this could be a big contributor to the increasing share of IP going to relievers.

What stadium is in your profile picture?

The stadium in the profile pic looks like Ft Wayne, Indiana